Security tools are confusing to use but they are even worse to install. You often get stuck installing development packages and loads of dependencies just to get one working. Most of the tools are written by a single person trying to get something out the door fast. And most security tools want advanced privileges so they can craft packets with ease or install `-dev` packages themselves.

The traditional answer to this was either to install all the things and just accept the sprawl and privilege escalation, or install them in a VM to segregate them. VMs are great for some use cases but they feel non-native and not developer friendly as part of an open source toolchain.

I am intimately familiar with this problem as I am on the core team for Gauntlt. Gauntlt is an open source security tool that–are you ready?–runs other security tools. In addition to Gauntlt needing its own dependencies like ruby and a handful of ruby gems, it also needs other security attack tooling installed to provide value. For people just getting started with Gauntlt, we have happily bundled all this together in a nice virtualbox (see gauntlt-starter-kit) that gets provisioned via chef. This has been a great option for us as it lets first-timers download a completely functioning lab. When teaching Gauntlt workshops and training classes we use the gauntlt starter kit.

The problem with the VM lifestyle is that while it’s great for a canned demo, it doesn’t expand to the real world so nicely.

Let’s Invoke Docker

While working with Gauntlt, we have learned that Gauntlt is fun for development but it works best when it sits in your Continuous Integration stack or in your delivery pipeline. For those familiar with docker, you know this is one thing that docker particularly excels at. So let’s try using docker as the delivery mechanism for our configuration management challenge.

In this article, we will walk through the relatively simple process of building a docker container with Gauntlt and how to run Gauntlt in a docker world. Before we get into dockerizing Gauntlt, let’s dig a bit deeper into how Gauntlt works.

Intro to Gauntlt

Gauntlt was born out of a desire to “be mean to your code” and add ruggedization to your development process. Ruggedization may be an odd way to phrase this, so let me explain. Years ago I found the Rugged Software movement and was excited. The goal has been to stop thinking about security in terms of compliance and a post-development process, but instead to foster creation of rugged code throughout the entire development process. To that end, I wanted to have a way to harness actual attack tooling into the development process and build pipeline.

Additionally, Gauntlt hopes to provide a simple language that developers, security and operations can all use to collaborate. We realize that, even in the push for everyone to do “all the things,” no single person can cross these groups meaningfully without a shared framework. Chef and Puppet crossed the chasm for dev and ops by adding a DSL, and Gauntlt is an attempt to do the same thing for security.

How Gauntlt Works

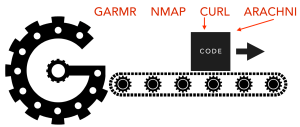

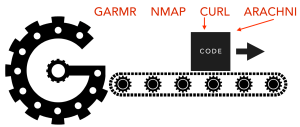

Gauntlt runs attacks against your code. It harnesses attack tools and runs them against your application to look for things like XSS or SQL Injection or even insecure configurations.

Gauntlt provides simple primitives to wrap attack tooling and parse the output. All of that logic is contained in what Gauntlt calls attack files. Gauntlt runs these files and exits with a pass/fail and returns a meaningful exit code. This makes Gauntlt a prime candidate for chaining into your CI/CD pipeline.
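
As a rough sketch of what chaining on the exit code can look like, the bash function below runs any command and reports pass or fail based on its exit status. The helper name `run_security_gate` is made up for illustration and is not part of Gauntlt; the sketch only assumes that the wrapped command exits 0 on success and non-zero on failure.

```shell
#!/usr/bin/env bash
# Sketch: gate a pipeline step on a command's exit status.
# run_security_gate is a hypothetical helper, not part of Gauntlt.
run_security_gate() {
  # "$@" is the full command to run, for example: gauntlt ./attacks/xss.attack
  if "$@"; then
    echo "security gate: passed"
    return 0
  else
    # In the else branch, $? still holds the exit status of the command.
    local status=$?
    echo "security gate: failed (exit ${status})" >&2
    return "${status}"
  fi
}

# Example use in a build script (assumes gauntlt is on PATH):
#   run_security_gate gauntlt ./attacks/xss.attack || exit 1
```

Because the function returns the wrapped command's own status, a CI server that fails a job on a non-zero exit needs no extra parsing of the output.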

Anatomy of an Attack File

Attack files owe their heritage to the Cucumber testing framework and its Gherkin language syntax. In fact, Gauntlt is built on top of Cucumber, so if you are familiar with it then Gauntlt will be really easy to grok. To get a feel for what an attack file looks like, let’s do a simple attack and check for XSS in an application.

    Feature: Look for cross site scripting (xss) using arachni against example.com

      Scenario: Using arachni, look for cross site scripting and verify no issues are found
        Given "arachni" is installed
        And the following profile:
          | name | value              |
          | url  | http://example.com |
        When I launch an "arachni" attack with:
          """
          arachni --checks=xss --scope-directory-depth-limit=1 <url>
          """
        Then the output should contain "0 issues were detected."

Feature is the top-level description of what we are testing, and Scenario is the actual execution block that gets run. Below that are the Given-When-Then steps, which are Gherkin’s plain-English way of describing a test. If you are interested, you can see lots of examples of how to use Gauntlt in the examples directory of gauntlt/gauntlt-demo.

For even more examples, we (@mattjay and @wickett) did a two hour workshop at SXSW this year on using Gauntlt and here are the slides from that.

Downsides to Gauntlt

Gauntlt is a ruby application and the downside of using it is that sometimes you don’t have ruby installed or you get gem conflicts. If you have used ruby (or python) then you know what I mean… It can be a major hassle. Additionally, installing all the attack tools and maintaining them takes time. This makes dockerizing Gauntlt a no-brainer so you can decrease your effort to get up and running and start recognizing real benefits sooner.

Dockerizing an Application Is Surprisingly Easy

In the past, I used docker like I did virtual machines. In retrospect this was a bit naive, I know. But, at the time it was really convenient to think of docker containers like mini VMs. I have found the real benefit (especially for the Gauntlt use-case) is using containers to take an operating system and treat it like you would treat an application.

My goal is to be able to build the container and then mount my local directory from my host to run my attack files (*.attack) and return exit status to me.

To get started, here is a working Dockerfile that installs gauntlt and the arachni attack tool (you can also get this and all other code examples at gauntlt/gauntlt-docker):

    FROM ubuntu:14.04
    MAINTAINER james@gauntlt.org

    # Install Ruby
    RUN echo "deb http://ppa.launchpad.net/brightbox/ruby-ng/ubuntu trusty main" > /etc/apt/sources.list.d/ruby.list
    RUN apt-key adv --keyserver keyserver.ubuntu.com --recv-keys C3173AA6
    RUN \
      apt-get update && \
      apt-get install -y build-essential \
        ca-certificates \
        curl \
        wget \
        zlib1g-dev \
        libxml2-dev \
        libxslt1-dev \
        ruby2.0 \
        ruby2.0-dev && \
      rm -rf /var/lib/apt/lists/*

    # Install Gauntlt
    RUN gem install gauntlt --no-rdoc --no-ri

    # Install Attack tools
    RUN gem install arachni --no-rdoc --no-ri

    ENTRYPOINT [ "/usr/local/bin/gauntlt" ]

Build that and tag it with `docker build -t gauntlt .` or use the `build-gauntlt.sh` script in gauntlt/gauntlt-docker. This is a pretty standard Dockerfile with the exception of the last line and the usage of ENTRYPOINT(1). One of the nice things about using ENTRYPOINT is that any arguments you give to `docker run` after the image name are appended to the entrypoint command. So anything after the image name `gauntlt` gets handled inside the container by `/usr/local/bin/gauntlt`.
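
To make the argument passing concrete, here is a small bash illustration that does not call docker at all. It only mimics how the ENTRYPOINT from the Dockerfile above and the arguments given after the image name end up as one command line inside the container. The function name `effective_command` is hypothetical.

```shell
#!/usr/bin/env bash
# Illustration only: this script does not run docker.
# It mimics how the ENTRYPOINT and the trailing docker run arguments combine.
ENTRYPOINT_BIN="/usr/local/bin/gauntlt"

# effective_command is a hypothetical helper name.
effective_command() {
  # Arguments given after the image name are appended to the entrypoint.
  printf '%s\n' "${ENTRYPOINT_BIN} $*"
}

# Corresponds to: docker run gauntlt --help
effective_command --help

# Corresponds to: docker run gauntlt ./examples/xss.attack --tags @slow
effective_command ./examples/xss.attack --tags @slow
```

The first call prints `/usr/local/bin/gauntlt --help`, which is the command the container would run for `docker run gauntlt --help`.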

I decided to create a simple stub script that I could put in /usr/local/bin so that I could invoke the container from anywhere. Yes, there is no error handling, and yes, since it is doing a volume mount this is certainly a bad idea. But, hey!

Here is a simple bash stub that can call the container and pass Gauntlt arguments to it.

    #!/usr/bin/env bash

    # usage:
    # gauntlt-docker --help
    # gauntlt-docker ./path/to/attacks --tags @your_tag

    # Quote the mount path and arguments so values with spaces survive intact.
    docker run -t --rm=true -v "$(pwd)":/working -w /working gauntlt "$@"
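
If you want a little more safety, one possible variation on the stub is sketched below. It wraps the same `docker run` call in a function, checks that docker is available first, and quotes the working directory and arguments. The function name `gauntlt_docker` and the `GAUNTLT_IMAGE` override are assumptions for illustration, not something that ships in gauntlt/gauntlt-docker.

```shell
#!/usr/bin/env bash
# Sketch of the stub with a basic availability check added.
# gauntlt_docker and GAUNTLT_IMAGE are hypothetical names.
gauntlt_docker() {
  # Default to the "gauntlt" tag used when building the image.
  local image="${GAUNTLT_IMAGE:-gauntlt}"

  # Fail early with a clear message if docker cannot be found.
  if ! command -v docker >/dev/null 2>&1; then
    echo "gauntlt-docker: docker not found on PATH" >&2
    return 127
  fi

  # Mount the current directory at /working and pass all arguments through.
  docker run -t --rm=true -v "$(pwd):/working" -w /working "${image}" "$@"
}

# Example use, after sourcing this file:
#   gauntlt_docker ./examples/xss.attack
```

The exit status of `docker run` is the last thing the function evaluates, so the caller still sees Gauntlt's pass or fail result.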

Putting It All Together

Let’s run our new container using our stub and pass in an attack we want it to run. You can run it without the path to the .attack file, as Gauntlt searches all subdirectories for anything with that extension, but let’s go ahead and be explicit. Running `gauntlt-docker ./examples/xss.attack` generates this passing output:

    @slow
    Feature: Look for cross site scripting (xss) using arachni against scanme.nmap.org

      Scenario: Using arachni, look for cross site scripting and verify no issues are found # ./examples/xss.attack:4
        Given "arachni" is installed # gauntlt-1.0.10/lib/gauntlt/attack_adapters/arachni.rb:1
        And the following profile: # gauntlt-1.0.10/lib/gauntlt/attack_adapters/gauntlt.rb:9
          | name | value |
          | url | http://scanme.nmap.org |
        When I launch an "arachni" attack with: # gauntlt-1.0.10/lib/gauntlt/attack_adapters/arachni.rb:5
          """
          arachni --checks=xss --scope-directory-depth-limit=1
          """
        Then the output should contain "0 issues were detected." # aruba-0.5.4/lib/aruba/cucumber.rb:131

    1 scenario (1 passed)
    4 steps (4 passed)
    0m1.538s

Now you can take this container and put it into your software build pipeline. I run this in Jenkins and it works great. Once you get this running and have confidence in the testing, you can start adding additional Gauntlt attacks. Ping me if you need some help getting this running or if you have suggestions to make it better at: james@gauntlt.org.
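
For a CI job, it can help to keep the scan output as a build artifact while still failing the build when an attack fails. The bash sketch below is one way to do that, under assumptions: `run_and_log` is a hypothetical helper, and the usage line assumes the `gauntlt-docker` stub from earlier is on the PATH of the build agent.

```shell
#!/usr/bin/env bash
# Sketch: keep a copy of a command's output in a log file while
# returning the command's own exit status (not tee's).
# run_and_log is a hypothetical helper name.
run_and_log() {
  local logfile="$1"
  shift

  # Merge stderr into stdout, then show it and save it at the same time.
  "$@" 2>&1 | tee "${logfile}"

  # PIPESTATUS[0] is the exit status of the first command in the pipeline.
  return "${PIPESTATUS[0]}"
}

# Example use in a CI shell step:
#   run_and_log gauntlt.log gauntlt-docker ./examples/xss.attack || exit 1
```

Without the `PIPESTATUS` lookup, the pipeline would report the status of `tee`, and a failing attack could slip through as a passing build.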

Summary

If you have installed a ruby app, you know the process of managing dependencies–it works fine until it doesn’t. Using a docker container to keep all the dependencies in one spot makes a ton of sense. Not having to alter your flow or resort to VMs makes even more sense. I hope that through this example of running Gauntlt in a container you can see how easy it is to abstract an app and run it almost like you would on the command line – keeping its dependencies separate from the code you’re building and other software on the box, but accessible enough to use as if it were just an installed piece of software itself.

Refs

1. Thanks to my buddy Marcus Barczak for the docker tip on entrypoint.

This article is part of our Docker and the Future of Configuration Management blog roundup. If you have an opinion or experience on the topic you can contribute as well!