A recent blog post on DevOps by the IT Skeptic entitled DevOps and traditional ITSM – why DevOps won’t change the world anytime soon got the community a’frothing. And sure, the article is a little simmered in anti-agile hate speech (apparently the Agilistias and cloud hypesters and cowboys are behind the whole DevOps thing and are leering at his wife and daughter and dropping his property values to boot) but I believe his critiques are in general very perceptive and that they are areas we, the DevOps movement, should work on.

Go read the article – it’s really long so I won’t sum the whole thing up here.

Here are the most germane critiques and what we need to do about them. He also has some poor, irrelevant, or misguided critiques, but why would I waste time on those? Let’s take action on the good stuff that can make DevOps better!

Lack of a coherent definition

This is a very good point. I went to the first meeting of an Austin DevOps SIG lately and was treated to the usual debate about “the definition of DevOps” and all the varied viewpoints going into that. We need to arrive at a more structured definition that either includes and organizes or excludes the various memetic threads. It’s been done with Agile, and we can do it too. My imperfect definition of DevOps on this site tries to clarify this by showing there are different levels (principles, methods, and practices) that different thoughts about DevOps slot into.

Worry about cowboys

This is a valid concern, and one I share. Here at NI, back in the day programmers had production passwords, and they got taken away for real good reasons. “Oh, let’s just give the programmers pagers and the root password” is not a responsible interpretation of DevOps but it’s one I’ve heard bandied about; it’s based on a false belief that as long as you have “really smart” developers they’ll never jack everything up.

Real DevOps shops that are adopting practices that could be risky, like continuous deployment, are doing it with extreme levels of safeguards in place (automated testing, etc.). This is similar to the overall problem in agile – some people say “agile? Great! I’ll code at random,” whereas really you need to have a very high percentage of unit test coverage. And sure, when you confront people with this they say “Oh, sure, you need that” but there is very little constructive discussion or tooling around it. How exactly do I build a good systems + app code integration/smoke test rig? “Uh you could write a bunch of code hooked to Hudson…” This should be one of the most discussed and best understood parts of the chain, not one of the least, to do DevOps responsibly.

We’re writing our own framework for this right now – James is doing it in Ruby, it’s called Sparta, and devs (and system folks) provide test chunks that the framework runs and times in an automated fashion. It’s not a well solved problem (and the big-dollar products that claim to do test automation are nightmares and not really automated in the “devs easily contribute tests to integrate into a continuous deploy” sense).
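To make the "devs and system folks contribute test chunks, the framework runs and times them" idea concrete, here is a minimal sketch in Python. It is not Sparta and does not reflect how Sparta is built; the names `check`, `CHECKS`, `run_checks`, and both example checks are made up for illustration.

```python
import time
from typing import Callable, Dict, List, NamedTuple


class Result(NamedTuple):
    name: str
    passed: bool
    seconds: float
    error: str  # empty string when the check passed


# Hypothetical registry: maps a check's name to the function that runs it.
CHECKS: Dict[str, Callable[[], None]] = {}


def check(func: Callable[[], None]) -> Callable[[], None]:
    """Decorator contributors use to register a test chunk."""
    CHECKS[func.__name__] = func
    return func


def run_checks(checks: Dict[str, Callable[[], None]]) -> List[Result]:
    """Run every registered check, timing each one and capturing failures."""
    results = []
    for name, func in checks.items():
        start = time.perf_counter()
        try:
            func()
            passed, error = True, ""
        except Exception as exc:  # one failing check must not stop the run
            passed, error = False, f"{type(exc).__name__}: {exc}"
        results.append(Result(name, passed, time.perf_counter() - start, error))
    return results


@check
def homepage_config_present() -> None:
    # Stand-in for an app-level check a developer might contribute.
    config = {"homepage_url": "/"}
    if "homepage_url" not in config:
        raise RuntimeError("homepage_url missing from config")


@check
def disk_has_headroom() -> None:
    # Stand-in for a systems-level check an ops person might contribute.
    used_fraction = 0.97  # made-up number for illustration
    if used_fraction >= 0.90:
        raise RuntimeError("disk above 90% full")


if __name__ == "__main__":
    for r in run_checks(CHECKS):
        status = "PASS" if r.passed else "FAIL"
        print(f"{status} {r.name} ({r.seconds:.4f}s) {r.error}")
```

The point of the registry is that adding a test is one decorated function, so neither devs nor ops need to touch the runner. A real rig would also need timeouts, environment targeting, and a hook into the deploy pipeline, none of which are shown here.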

Team size

Working at a large corporation, I also share his concern about people’s cunning DevOps schemes that don’t scale past a 12 person company. “We’ll just hire 7 of the best and brightest and they’ll do everything, and be all crossfunctional, and write code and test and do systems and ops and write UIs and everything!” is only a legit plan for about 10 little hot VC funded Web 2.0 companies out there. The rest of us have to scale, and doing things right means some specialization and risks siloization.

For example, performance testing. When we had all our developers do their own performance testing, the limit of the sophistication of those tests was “I’ll run 1000 hits against it and time how long it takes to finish. There, 4 seconds. Done, that’s my performance testing!” The only people who think Ops, QA, etc. are such minor skill sets that someone can just do them all are people who are frankly ignorant of those fields. Oh, P.S. The NoOps guys fall into this category, please don’t link them to DevOps.

We have struggled with this. We’ve had to work out what testing our devs do versus how we closely align with external test teams. Same with security, performance, etc. The answer is not to completely generalize or completely silo – Yahoo! had a great model with their performance team, where there is a central team of super-experts but there are also embedded folks on each product team.
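Going back to the "1000 hits in 4 seconds" test: one reason that number says so little is that a single total hides the slow tail. The sketch below uses made-up latencies (980 fast requests and 20 slow ones, an assumption chosen purely for illustration) and a simple nearest-rank percentile to show what the total leaves out.

```python
import math
from typing import Dict, List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: the value at rank ceil(pct% of n) in sorted order."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Report the naive total alongside the numbers a performance tester would want."""
    return {
        "total": sum(latencies),
        "p50": percentile(latencies, 50),
        "p99": percentile(latencies, 99),
        "max": max(latencies),
    }


# Made-up data: 980 requests at 2 ms and 20 requests at 100 ms.
latencies = [0.002] * 980 + [0.100] * 20
summary = summarize(latencies)

if __name__ == "__main__":
    for key, value in summary.items():
        print(f"{key}: {value:.3f}s")
```

With this data the run still finishes in about 4 seconds and the median request looks great, but the 99th percentile is fifty times slower than the median. Concurrency, ramp-up, and realistic request mixes are further things a specialist would bring and this sketch ignores.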

Hiring people

Very related to the previous point – again unless you’re one of the 10 hottest Web 2.0 plays and you can really get the best of the best, you need to staff your organization with random folks who graduated from UT with a B average. You have to have and manage tiers as well as silos – some folks are only ready to be “level 1 support” and aren’t going to be reading some dev’s Java code.

Traditional organizations and those following ITIL very closely can definitely create structures that promote bad silos and bad tiering. But just assuming everyone will be of the same (high) skill level and be able to know everything is a fallacy that is easy to fall into, since it’s those sorts of elite individuals who are the leading adopters of DevOps. Maybe Gene Kim’s book he’s working on (“Visible DevOps” or similar) will help with that.

Tools fixation

Definitely an issue. An enhanced focus on automation is valuable. Too many ops shops still just do the same crap by hand day after day, and should be challenged to automate and use tools. But a lot of the DevOps discussions do become “cool tool litanies” and that’s cart before the horse. In my terminology, you don’t want to drive the principles out of the practices and methods – tooling is great but it should serve the goals.

We had that problem on our team. I had to talk to our Ops team and say “Hey, why are we doing all these tool implementations? What overall goal are they serving?” Tools for the sake of tools are worse than pointless.

Process

It is true that with agile and with DevOps, some folks are using it as an excuse to toss out process. It should simply be a different kind of process! And you need to take into account all the stuff that should be in there.

A great example is Michael Howard et al. at Microsoft with their Security Development Lifecycle. The first version of it was waterfall. But now they’ve revamped it to have an agile security development lifecycle, so you know when to do your threat modeling etc.

Build instead of buy

Well, there are definitely some open source zealots involved with most movements that have any sysadmins involved. We would like to buy instead of build, but the existing tools tend to either not solve today’s problems or have poor ROI.

In IT, we implemented some “ITIL compliant” HP tools for problem tracking, service desk, and software deployment. They suck, and are very rigid, and cost a lot of money, and required as much if not more implementation time than writing something from scratch that actually addressed our specific requirements. And in general that’s been everyone’s experience. The Ops world has learned to fear the HP/IBM/CA/etc systems management suites because it’s just one of those niches that is expensive and bad (like medical or legal software).

But having said that, we buy when we can! Splunk gave us a lot more than cobbling together our own open source thing. Cloudkick did too. Sure, we tend to buy SaaS a lot more than on prem software now because of the velocity that gives us, but I agree that you need to analyze the hidden costs of building as part of a build/buy – you just need to also see the hidden costs and compromised benefits of a buy.

Risk Control

This simply goes back to the cowboy concern. It’s clearly shown that if you structure your process correctly, with the right testing and signoff gates, then agile/devops/rapid deploys are less risky.

We came to this conclusion independently as well. In IT, we ran (still do) these Web go lives once a month. Our Web site consists of 200+ applications and we have 70 or so programmers, 7 Web ops, a whole Infrastructure department, a host of third party stuff (Oracle and many more)… Every release plan was 100 lines long and the process of planning them and executing on them was horrific. The system gets complex enough, both technically and organizationally, that rollbacks + dependencies + whatnot simply turn into paralysis, and you have to roll stuff out to make money. When the IT apps director suggested “This is too painful – we should just do these quarterly instead, and tell the business they get to wait 2 more months to make their money,” the light went on in my mind. Slower and more rigorous is actually worse. It’s not more efficient to put all the product you’re shipping for the month onto a huge ass warehouse on the back of a giant truck and drive it around doing deliveries, either; this should be obvious in retrospect. Distribution is a form of risk management. “All the eggs in one big basket that we’ll do all at one time” is the antithesis of that.
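A back-of-the-envelope way to see the "one big basket" problem, under a deliberately crude assumption: every change independently has the same small chance of breaking the release it ships in. Both the independence and the 2% figure are assumptions made up for this sketch, not measurements from our releases.

```python
def batch_failure_probability(p_change: float, n_changes: int) -> float:
    """Chance that at least one of n independent changes breaks the release.

    Toy model: each change fails with probability p_change, independently.
    """
    if not 0.0 <= p_change <= 1.0:
        raise ValueError("p_change must be between 0 and 1")
    if n_changes < 0:
        raise ValueError("n_changes must be non-negative")
    return 1.0 - (1.0 - p_change) ** n_changes


if __name__ == "__main__":
    p = 0.02  # assumed per-change failure chance, for illustration only
    for n in (1, 10, 70):
        print(f"{n:>3} changes in one release: "
              f"{batch_failure_probability(p, n):.2f} chance of trouble")
```

With these assumed numbers, a release carrying 70 changes hits trouble roughly three times out of four, and when it does, every change in the batch is caught up in the rollback. Ship the same changes one at a time and the expected number of bad changes is no different, but each failure holds up only itself and is far easier to pin down.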

The Future

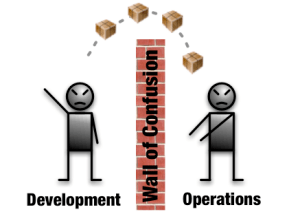

We started DevOps here at NI from the operations guys. We’d been struggling for years to get the programmers to take production responsibility for their apps. We had struggled to get them access to their own logs, do their own deploys (to dev and test), let business users input Apache redirects into a Web UI rather than have us do it… We developed a whole process, the Systems Development Framework, that we used to engage with dev teams and make sure all the performance, reliability, security, manageability, provisioning, etc. stuff was getting taken care of… But it just wasn’t as successful as we felt like it could be. Once we saw that a more integrated model was possible, we realized success was actually an option. Ask most sysadmin shops if they think success is actually a possible outcome of their work, and you’ll get a lot of hedging “well success is not getting ruined today” kinds of responses.

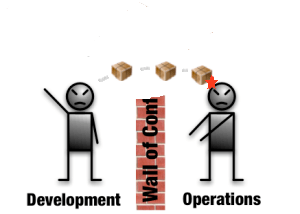

By combining ops and devs onto one team, by embedding ops expertise onto other dev teams, by moving to using the same tools and tracking systems between devs and ops, and striving for profound automation and self service, we’ve achieved a super high level of throughput within a large organization. We have challenges (mostly when management decides to totally change track on a product, sigh) but from having done it both ways – OMG it’s a lot better. Everything has challenges and risks and there definitely needs to be some “big boy” compatible thinking on DevOps – but it’s like anything else, those who adopt early will reap the rewards and get competitive advantage on the others. And that’s why we’re all in. We can wait till it’s all worked out and drool-proof, but that’s a better fit for companies that don’t actually have to produce/achieve any more (government orgs, people with more money than God like oil and insurance…).