This came up today at work and I realized that over my now-decades of cloud engineering, I have developed a very specific way of using tags that sets both infra dev teams and SRE teams up for success, and I wanted to share it.

Who cares about tags? I do. They are the only persistent source of information you can trust (as much as you can trust anything in this fallen world) to communicate information about an infrastructure asset beyond what the cloud or virtualization fabric it’s running in knows. You may have a Terraform state, you may have a database or etcd or something that knows what things are – but those systems can go down or get corrupted. Tags are the one thing that anyone who can see the infrastructure – via console or CLI or API or integrated tool – can always see. Server names are notoriously unreliable – ideally in a modern infrastructure you don’t reuse servers from one task to another or put multiple workloads on one, but that’s a historical practice that pops up all too often, and server names have character limits (even if they don’t, the management systems around them usually enforce one).

Many powerful tools like Datadog lean heavily on tags. It simplifies operation and prevents errors if a new production app server automatically gets pulled into the right monitoring dashboards and alerting schemes because it is tagged right.

I’ve run very large complex cloud environments using this scheme as the primary means to drive operations.

Top level tag rules:

- Tag everything. Tagging’s not just for servers. Every cloud element that can take a tag, tag. Network, disk images, snapshots, lambdas, cloud services, weird little cloud widgets (“S3 VPC endpoint!”).

- Use uniform tags. It’s best to specify “all lower case, no spaces” and so on. If people decide to word a tag slightly differently in two places, the value is lost. Both the key and the value, but especially the key – teach people that if you say “owner” that means “owner” not “Owner” and “owning party” and whatever else.

- Don’t overtag with attributes you can easily see. Instance size, what AZ it’s in, and so on are already part of the cloud metadata, so it’s inefficient to add tags for them.

- Use standard tags. This is what I’ll cover in the rest of this article.
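To make the "uniform tags" rule concrete, here is a minimal sketch of a tag linter. The exact rules are assumptions for illustration: keys must be lower case with no spaces, while values only need to be non-empty and free of whitespace (so that externally issued codes can keep their native case). The function name `tag_violations` is hypothetical.

```python
import re

# Assumed key convention: lower case, starts with a letter, no spaces.
KEY_PATTERN = re.compile(r"[a-z][a-z0-9_-]*")


def tag_violations(tags):
    """Return a list of human-readable problems found in a dict of tags."""
    problems = []
    for key, value in tags.items():
        if not KEY_PATTERN.fullmatch(key):
            problems.append(f"bad key: {key!r}")
        # Assumed value convention: non-empty and no whitespace.
        if value == "" or re.search(r"\s", value):
            problems.append(f"bad value for {key!r}: {value!r}")
    return problems
```

A check like this could run in a deployment pipeline before anything is created, so that "Owner" or "owning party" gets rejected early instead of silently splitting your data.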

At the risk of oversimplifying, you need two things out of your systems environment – compliance and management. And tags are a great way to get both.

Compliance

Attribution! Cost! Security! You need to know where infrastructure came from, who owns it, who’s paying for it, and if it’s even supposed to be there in the first place.

Who owns it?

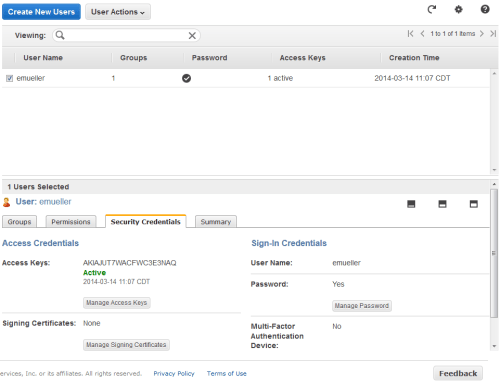

Tag all cloud assets with an owner (an email address, or basically whatever is required to uniquely identify who owns an asset). It should be a team email for persistent assets. If it’s a personal email, then the assumption should be that if that person leaves the company those assets get deleted (good for sandboxes etc).

The amount of highly paid engineer time I’ve seen wasted over the last decade of people having to go out and do cattle calls of “Hey who owns these… we need to turn some off for cost or patch them for security or explain them for compliance… No really, who owns these…” is shocking.

owner:myteam@mycompany.com

Who’s paying for it

This varies but it’s important. “Owner” might not be sufficient in an environment – often some kind of cost allocation code is required based on how your company does finances. Is it a centralized expense or does it get allocated to a client? Is it a production or development expense? Those are often handled differently from a finance perspective. At scale you may need a several-parter – in my current consulting job there’s a contract number but also a specific cost code inside that contract number that we need all expenses divvied up between.

billing:CUCT30001

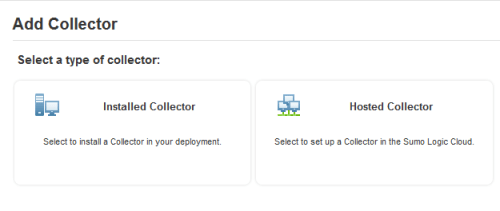

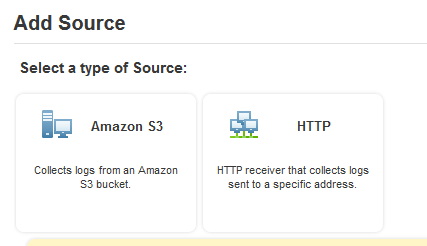

Where did it come from

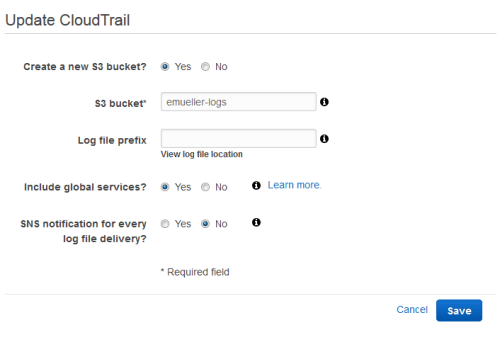

Traceability both “up” and “down” the chain. When you go look at a random cloud instance, even if you know who it belongs to, you can’t tell how it got there. Was it created by Terraform? If so where’s the state file? Was it created via some other automation system you have? GitHub? Rundeck? Custom Python app #25?

Some tools like CloudFormation do this automatically. Otherwise, consider adding a source tag or set of tags with sufficient information to trace the live system back to the automation. Developers love tagging git commits and branches with versions and JIRA tickets and release dates and such, and the same concept applies here. Different things make sense depending on your tech stack – if you GitOps everything then the source might be a specific build, or you might want to say which S3 bucket your tfstate is in… Here as an example, I’m working with a system that is instantiated by Terraform from a GitOps pipeline, so I’ve made a source tag that says github and then the repo name and then the action name. And for the tfstate I have it saved in an S3 bucket named “mystatebucket.”

source:github/myapp/deploy-action

sourcestate:s3/mystatebucket
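As a rough sketch of how tooling might read these tags back, the snippet below splits a value such as the ones above into the system that created the asset and the location within that system. The `system/location` reading of the value and the function name `parse_source` are assumptions for illustration, not a fixed standard.

```python
def parse_source(value):
    """Split a 'system/location' tag value at the first slash."""
    # Everything before the first "/" names the system (github, s3, ...);
    # the remainder is where to look inside that system.
    system, _, location = value.partition("/")
    return {"system": system, "location": location}
```

With the example values, `parse_source("github/myapp/deploy-action")` gives the system `github` and the location `myapp/deploy-action`, which is enough for a dashboard or chat bot to point an operator at the right repo and action.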

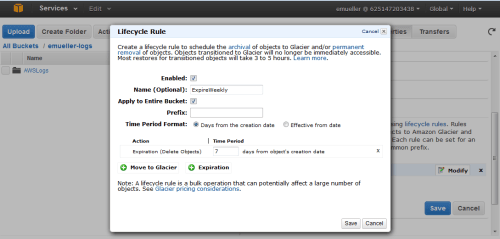

When does it go

OK, I know the last two sound like the lyrics to “Cotton-Eyed Joe”, which is a bonus. But a major source of cost creep is infrastructure that was intended to be there for a short time – a demo, a dev cycle – that ends up just living forever. And sure, you can just send nag-o-grams to the owner list, but it’s better to tag systems with an expires tag in date format (ideally YYYY-MM-DD-HH-MM-SS as God intended). “expires:never” is acceptable for production infrastructure, though I’ve even used real expiry dates on autoscaling prod infrastructure to make sure systems get turned over and don’t live too long.

expires:2025-02-01-00-00-00

or

expires:never
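A cleanup job can act on this tag directly. The sketch below assumes the six-part format of the dated example value above and treats the timestamp as UTC; the timezone is an assumption, since a tag value carries none, and the function name `is_expired` is hypothetical.

```python
from datetime import datetime, timezone

# Matches values like 2025-02-01-00-00-00.
EXPIRES_FORMAT = "%Y-%m-%d-%H-%M-%S"


def is_expired(expires_value, now):
    """Return True if the asset's expires tag is at or before `now`."""
    if expires_value == "never":
        return False
    # Assumption: tag timestamps are in UTC.
    expiry = datetime.strptime(expires_value, EXPIRES_FORMAT)
    expiry = expiry.replace(tzinfo=timezone.utc)
    return now >= expiry
```

Passing `now` in explicitly (for example `datetime.now(timezone.utc)`) keeps the function easy to test. A malformed value raises `ValueError`, which is probably what you want: a bad expires tag should be loud, not silently treated as "never".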

Management

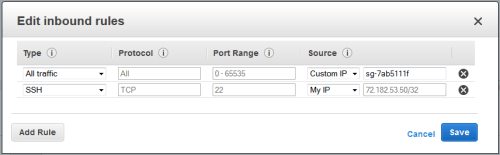

Operations! Incidents! Cost and security again! Keep the entire operational cycle, including “during bad production incidents”, in mind when designing tags. People tear down stacks/clusters, or go into the console and “kill servers”, and accidentally leave other infrastructure – you need to be able to identify and clean up orphaned assets. Hackers get your AWS key and spin up a huge volume of bitcoin miners. Identifying and acting on infrastructure accurately and efficiently is the goal.
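One way to surface orphaned or rogue assets is to report everything that is missing the compliance tags. This is a minimal sketch that assumes you have already pulled an inventory into a plain dict of asset ID to tags (how you fetch it depends on your cloud and tooling). The required set and the names `missing_tags` and `find_untagged` are illustrative assumptions.

```python
# Assumed required set: the compliance tags described earlier.
REQUIRED_TAGS = ("owner", "billing", "source", "expires")


def missing_tags(tags, required=REQUIRED_TAGS):
    """List the required keys that are absent or empty on one asset."""
    return [key for key in required if not tags.get(key)]


def find_untagged(inventory, required=REQUIRED_TAGS):
    """Map each non-compliant asset ID to the tags it is missing."""
    report = {}
    for asset_id, tags in inventory.items():
        missing = missing_tags(tags, required)
        if missing:
            report[asset_id] = missing
    return report
```

Anything that shows up with no tags at all is a prime suspect: either a leftover from a teardown or something nobody on your team launched.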

As in any healthy system, the “compliance” tags above aren’t just useful to the beancounters, they’re helpful to you as a cloud engineer or SRE. But beyond that, you want a taxonomy of your systems to use to manage them by grouping operations, monitoring, and so on.

This scheme may differ based on your system’s needs, but I’ve found a general formula that fits in most cases I come across. Again, it assumes virtual systems where servers have one purpose – that’s modern best practice. “Sharing is the devil.”

EARFI

I like to pronounce this “errr-feee.” It’s a hierarchy to group your systems.

- environment – What environment does this represent to you, e.g. dev, test, production? This is usually the primary element of concern to an operator. “environment:uat” vs “environment:prod”.

- application – What application or system is this hosting? The online banking app? The reporting system? The security monitoring server? The mobile game backend? GenAI training? “application:banking”.

- role – What function does this specific server perform? Webserver, dbserver, appserver, kafka – systems in an identical role should have identical loadouts. “role:apiserver” vs “role:dbserver”. Keep in mind this is a hierarchy and you won’t have guaranteed uniqueness across it – for example, “application:banking,role:dbserver” may be quite different from “application:mobilegame,role:dbserver” so you would usually never refer to just “role:dbserver.”

- flavor – Optional, but useful in case you need to differentiate something special in your org that is a primary lever of operation (Windows vs Linux? CPU vs GPU nodes in the same k8s cluster? v1 vs v2?). I usually find there’s only one of these (besides of course region and things you shouldn’t tag because they are in other metadata). For our apiserver example, consider that maybe we have the same code running on all our api servers but via load balancer we send REST queries to one set and SOAP queries to another set for caching and performance reasons. “flavor:rest” vs “flavor:soap”.

- instance – A unique identifier among identical boxes in a specific EARF set, most commonly just an integer. “instance:2”. You could use a GUID if you really need it but that’s a pain to type for an operator.

This then allows you to target specific groups of your infrastructure, down to a single element or up to entire products.

- “Run this week’s security patches on all the environment:uat, application:banking, role:apiserver, flavor:rest servers. Once you verify, you can do the same on environment:prod.”

- “The second of the three servers in that autoscaling group is locked up. Terminate environment:uat, application:banking, role:apiserver, flavor:rest, instance:2.”

- “We seem to be having memory problems on the apiservers. Is it one or all of the boxes? Check the average of environment:prod, application:banking, role:apiserver, flavor:rest and then also show it broken down by instance tag. It’s high on just some of the servers but not all? Try flavor:rest vs flavor:soap to see if it’s dependent on that functionality. Is it load do you think? Compare to the aggregate of environment:uat to see if it’s the same in an idle system.”

- “Set up an alert for any environment:prod server that goes down. And one for any environment:prod, application:banking, role:apiserver that throws 500 errors.”

- “Security demands we check all our DB servers for a new vulnerability. Try sending this curl payload to all role:dbserver servers, doesn’t matter what application. They say it won’t hurt anything but do it to environment:uat before environment:prod for safety.”
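The targeting in these examples boils down to "every asset whose tags match all of these key/value pairs." Here is a minimal sketch of that selection, assuming the inventory is a plain dict of asset ID to tags. The names `matches` and `select`, and the asset IDs in the usage note, are hypothetical.

```python
def matches(tags, selector):
    """True if every key/value pair in the selector is present in tags."""
    return all(tags.get(key) == value for key, value in selector.items())


def select(inventory, **selector):
    """Return the IDs of all assets matching the given tag values."""
    return [
        asset_id
        for asset_id, tags in inventory.items()
        if matches(tags, selector)
    ]
```

For example, `select(inventory, environment="uat", application="banking", role="apiserver", flavor="rest")` narrows to one group of servers, adding `instance="2"` narrows to a single box, and dropping everything but `role="dbserver"` widens to every DB server regardless of application. Fewer keys means a broader target, which is the point of the hierarchy.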

So now a random new operator gets an alert about a system outage and logs into the AWS console and sees not just “i-123456 started 2 days ago,” they see

owner:myteam@mycompany.com

billing:CUCT30001

source:github/myapp/deploy-action

sourcestate:s3/mystatebucket

expires:never

environment:prod

application:mobilegame

role:dbserver

flavor:read-only

instance:2

That operator now has a huge amount of information to contextualize their work. Without the tags, at best they’d have to go look it up in docs or systems, and at worst they’d have to just start serially spamming people. They know who owns it, what generates it, and what it does, and they have hints about how important it is. (prod – probably important. A duplicate read secondary – could be worse.) And then runbooks can be very crisp about what to do in what situation by also using the tags. “If the server is environment:prod then you must initiate an incident <here>… If the server is a role:dbserver and a flavor:read-only it is OK to terminate it and bring up a new one but then you have to go run runbook <X> and run job <y> to set it up as a read secondary…”
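Runbook rules keyed on tags translate almost directly into code. The sketch below is purely illustrative: the step wording and the function name `runbook_steps` are made up, and it uses the flavor:read-only tag from the example asset above to identify a read secondary.

```python
def runbook_steps(tags):
    """Return the runbook steps that apply to an asset, based on its tags."""
    steps = []
    if tags.get("environment") == "prod":
        steps.append("initiate an incident")
    if tags.get("role") == "dbserver" and tags.get("flavor") == "read-only":
        # A read secondary is replaceable, but needs setup afterwards.
        steps.append("terminate and replace, then set up as a read secondary")
    return steps
```

For the example asset above, both rules fire: it is prod, so an incident is required, and it is a read-only dbserver, so replacing it is an acceptable fix.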

Feel free to let me know how you use tags and what you can’t live without!